Guest post by John, G3WGV

My first article on Folding@home for Club Loggers got some good feedback so I have decided to write another! Several of you have asked how it is possible to amass a score of 100 million credits in just a few weeks and today I will focus on the answer: Graphics cards.

Let’s talk about credits

Firstly, a bit more about what the scoring system means and how it works. Credits, as they are known, are allocated on the basis of two important factors: a base credit reflecting the scientific importance of the work unit (WU) and a bonus for returning the completed WU quickly.

It hugely benefits the science if results are available quickly, so bonuses can be considerable. This is because the folding project is effectively trying out vast numbers of different molecular folding arrangements. Most will result in a dead-end scientifically but eventually a promising fold architecture will be found. The important thing is to be able to quickly discard the dead ends so the science can focus on more promising candidates.

The practical upshot of all this is that returning completed WUs quickly is highly prized and is duly rewarded by big bonuses. The challenge, therefore, is to maximise WU throughput.
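To make the effect of the bonus concrete, here is a minimal sketch. It assumes a square-root quick-return bonus of the general kind described in the project's points documentation. The function name, the constant `k` and all the numbers are made up for illustration and are not taken from any real work unit.

```python
import math


def estimated_credit(base_credit: float, k: float,
                     deadline_days: float, elapsed_days: float) -> float:
    """Return base credit scaled by an assumed quick-return bonus.

    The bonus multiplier is never less than 1, so a slow return
    still earns the base credit.
    """
    if elapsed_days <= 0:
        raise ValueError("elapsed_days must be positive")
    bonus = max(1.0, math.sqrt(k * deadline_days / elapsed_days))
    return base_credit * bonus


# Hypothetical WU: 10,000 base credits, k = 0.75, 3-day deadline.
fast = estimated_credit(10_000, 0.75, 3.0, 0.25)  # returned in 6 hours
slow = estimated_credit(10_000, 0.75, 3.0, 2.5)   # returned in 2.5 days

print(f"fast return: {fast:,.0f} credits")
print(f"slow return: {slow:,.0f} credits")
```

Under these assumed numbers the fast return earns three times the credit of the slow one for exactly the same work unit, which is why throughput matters so much.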

Graphics cards are better than CPUs

We tend not to think of graphics cards, or Graphics Processing Units (GPUs) to use the modern terminology, as computer processing systems: they are just there to drive our screens, right? Yes, they are, but it turns out that the sort of processing needed to display stuff is exactly what the Folding@home project thrives on: dealing with vast numbers of parallel floating-point operations. Next, we have to grasp that it is possible to run software in the GPU: we are doing that all the time, of course, with the software that drives our screens, so it is a logical extension that we can run other software in the GPU as well. That is what Folding@home does.

A mid-range GPU will always significantly out-perform a fast CPU on folding work. And so, in pursuit of scientific research and Folding@home credits, our attention turns to GPUs.

Gaming GPUs are the answer

Some of you will be gamers; most of us probably are not – but we can thank the world of gamers for creating a consumer demand for ever more powerful GPUs. Gamers have an insatiable appetite for multiple high-resolution screens with outrageous refresh rates and the GPU industry has responded to that demand with each new generation of cards.

Folders naturally want to know which GPUs are best, so a lot of work is done on the nominal “Points per Day” (PPD) capability as each new card comes along. This only tells part of the story, because the tests have to make various assumptions about WU allocation. It is nevertheless a good indicator of the relative performance of each GPU. You can find a very comprehensive list of GPUs and nominal PPDs here.

Some caveats

It is tempting to go for the most powerful GPU you can afford but, even if cost is no object, it isn’t necessarily the best option. Very powerful GPUs can sometimes be rather inefficient at dealing with smaller WUs and that results in lower PPD scores than you might otherwise expect. In the worst case the work unit may even be allocated elsewhere. Often two mid-range GPUs will outperform a single high-end GPU at much lower overall cost.

It’s important to note that not all GPUs are capable of folding. In particular, GPUs that are integrated into the motherboard are generally not suitable and most Apple computers are unable to fold in the GPU (the usual thing – Apple goes its own way). Generally, PCIe-connected Nvidia cards work well and so, to a lesser extent, do AMD cards. The vast majority of folding systems use Nvidia GPUs.

Some practical implementations

I asked some of the Club Log folders at the top end of the table what they were running.

One Club Logger is running a couple of Nvidia GTX 1070 GPUs. These are no longer made but they were very popular with gamers, so they crop up regularly second hand on eBay. His pair of 1070s is turning in around one million PPD. Another contributor uses an Nvidia GTX 1060 GPU and also folds using multiple CPUs to make around 500k PPD.

Several Club Loggers use multiple systems and one such has three computers running GTX 1080, GT 730 and GT 220 GPUs respectively to yield about 700k PPD. Another uses two systems with a GTX 1060 GPU in one and multiple CPUs to earn some 500k PPD. A bit more GPU oomph bags another Club Logger some 1.5 million PPD out of an RTX 2060 and GTX 1050 GPU combination, with the 2060 doing most of the heavy lifting.

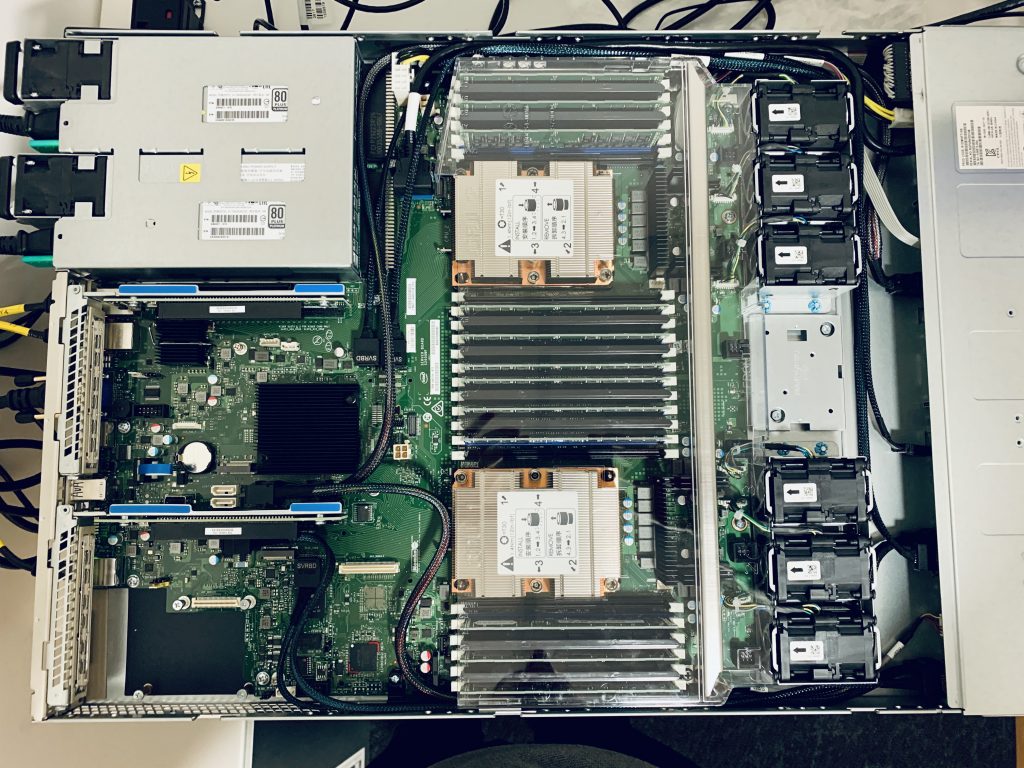

Our very own G7VJR is running a vast number of Club Log CPUs to yield around 900k PPD but for a while he was running a V100 GPU turning in about 4.5 million PPD until he had to let it get on with some proper, i.e. fee earning, work! The V100 is a curious beast: a GPU with no outputs for screens. In fact, the V100 is really a specialist floating point computer and it is only considered to be a GPU because of its architecture.

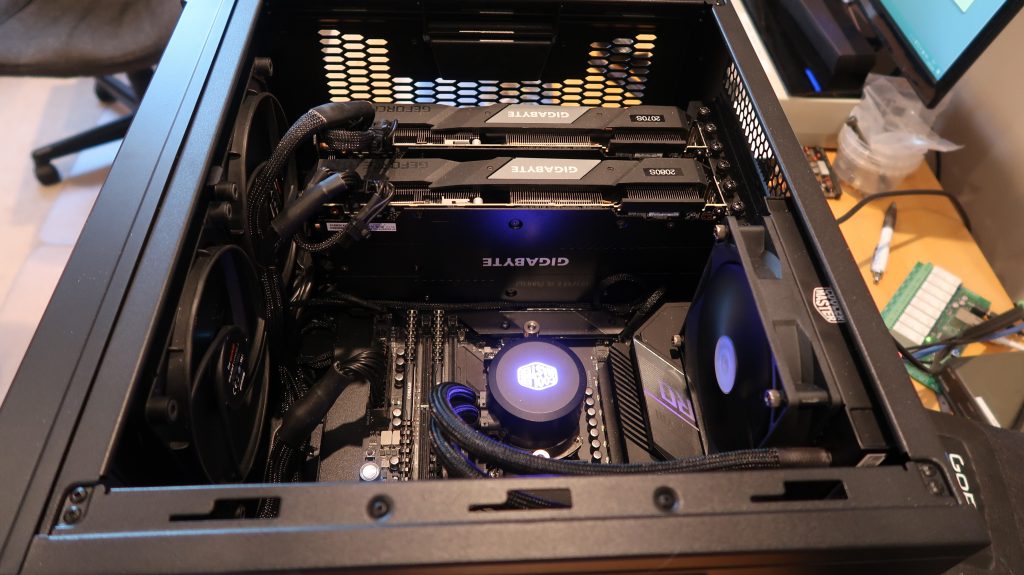

And what about G3WGV? Rather by accident I ended up with an RTX 2070 Super as the GPU for my new-build system. That was a good choice as it turns in about 1.5 million PPD. I planned to get another 2070S to double the throughput but the big increase in folders arising out of Covid-19 has resulted in them becoming very popular and none of my usual suppliers had stock. In the end I stepped up to an RTX 2080 Super which produces an additional two million PPD. Bringing up the rear is my old development machine with a GTX 1650 which manages a not-to-be-sniffed-at 300k PPD. These days I fold only on my GPUs because my CPUs, despite being quite powerful, would add less than 10% to my PPD earnings capability.
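As a quick sanity check on the "100 million credits in just a few weeks" claim, the PPD figures quoted above can simply be added up. This is a rough sketch: it assumes the cards run flat out 24×7 and that the nominal PPD figures hold every day, which in practice they will not quite do.

```python
# Approximate PPD figures as quoted in the text above.
gpu_ppd = {
    "RTX 2070 Super": 1_500_000,
    "RTX 2080 Super": 2_000_000,
    "GTX 1650": 300_000,
}

total_ppd = sum(gpu_ppd.values())

# Days needed to reach 100 million credits at that steady rate.
target_credits = 100_000_000
days_to_target = target_credits / total_ppd

print(f"combined: {total_ppd:,} PPD")
print(f"days to {target_credits:,} credits: {days_to_target:.1f}")
```

At a combined 3.8 million PPD, 100 million credits takes a little under four weeks.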

Running 24×7

All these Club Loggers run their systems 24×7. This makes a huge difference to scores because WUs will always finish and in good time. Shutting down a computer in the middle of a WU is really bad for the science and therefore for the credits!

If you really must shut down your system, then plan ahead and set “Finish” in the advanced control app at the appropriate time. The current WU will complete and no new WUs will be requested. When it’s finished and the results uploaded then you can shut down and go to bed.

What GPU to get?

I suggest that you set a budget then see what that gets you by looking at the GPU chart. You can then revise the budget down (or up if you’re feeling generous!) as needed to meet your folding aspirations. Do consider second-hand if your budget is tight: gamers always want the latest and greatest, so there is a regular supply of high-end GPUs coming onto the second-user market.

For reasonably serious folding the RTX2070, especially the Super variant, makes a very good case for itself in terms of PPD per buck. If you can stretch to the 2080 or even the 2080 Super then that offers more PPD, albeit at more cost, for a similar PPD/dollar return on investment. Lower down the scale, the RTX2060 gives a good account of itself and, a bit further down the pecking order, there is a slew of previous generation 1000 series cards, most of which are now out of production. Even the quite humble GTX1650 will turn in 300k PPD or so.

At the top end of the scale, without getting into specialist GPUs like the V100, is the RTX2080Ti, which will turn in getting on for 3 million PPD but it’s a $1200 card. Word on the street is that the 2080Ti can be a bit temperamental and awkward to keep cool. I think I would avoid it. Get a couple of RTX2070 Supers instead, for about the same outlay.
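The PPD-per-dollar comparison can be sketched in a few lines. The figures are only approximate. The 2080Ti is taken at a full 3 million PPD, which is generous given "getting on for". The pair of 2070 Supers is assumed to cost $1200 in total, which is my reading of "about the same outlay" rather than a quoted price.

```python
def ppd_per_dollar(ppd: float, price_usd: float) -> float:
    """Return points per day earned for each dollar spent on hardware."""
    if price_usd <= 0:
        raise ValueError("price_usd must be positive")
    return ppd / price_usd


# One RTX 2080 Ti: at most about 3 million PPD for $1200.
single_2080ti = ppd_per_dollar(3_000_000, 1200)

# Two RTX 2070 Supers: 1.5 million PPD each, assumed $1200 for the pair.
pair_2070s = ppd_per_dollar(2 * 1_500_000, 1200)

print(f"2080 Ti:       {single_2080ti:,.0f} PPD per dollar")
print(f"2 x 2070S:     {pair_2070s:,.0f} PPD per dollar")
```

On these assumptions the pair does at least as well as the single card, before the cooling and reliability concerns are taken into account.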

Other things to consider

Check your expansion slot real estate! Nearly all of these mid-upper end GPUs are dual width, using up two expansion slots-worth of space and they are quite long too. Most ATX motherboards will only accommodate two GPUs and no other expansion cards. Modern GPUs are all based around PCIe x16, which any motherboard less than ten years old should support.

These top-end GPUs have a ferocious appetite for electricity and this can have a big effect on your utility bills! My 2070S/2080S combination runs at about 500W, or 12kWh per day. You’ll also need a power supply unit that’s man enough for the job. Don’t scrimp on this: cheap power supplies won’t cut it for this sort of thing 24×7. You need at least a 600W unit for a single GPU and 850W if you’re running two. I use a Corsair RM850i and that works a treat.
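The running-cost arithmetic is easy to reproduce for your own setup. In this sketch the 500W draw and the PPD figures come from the text above, while the electricity price is a purely hypothetical placeholder: substitute your own tariff.

```python
def daily_energy_kwh(watts: float, hours: float = 24.0) -> float:
    """Convert a steady power draw in watts into kWh used over `hours`."""
    return watts * hours / 1000.0


system_watts = 500.0                      # 2070S/2080S combination
energy_per_day = daily_energy_kwh(system_watts)

PRICE_PER_KWH = 0.15                      # hypothetical tariff, any currency
cost_per_day = energy_per_day * PRICE_PER_KWH

combined_ppd = 1_500_000 + 2_000_000      # 2070S + 2080S
ppd_per_watt = combined_ppd / system_watts

print(f"{energy_per_day:.1f} kWh per day")
print(f"{cost_per_day:.2f} per day at the assumed tariff")
print(f"{ppd_per_watt:,.0f} PPD per watt")
```

PPD per watt is a handy figure for comparing cards if the electricity bill matters more to you than the purchase price.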

Most mid-upper end GPUs take more power than the PCIe interface is capable of delivering. These GPUs require a separate PCIe power lead from the PSU. Most modern PSUs have these extra connections but, as this is a relatively recent requirement, older PSUs may not. Something to check!

Be careful about cooling. Most ready-built consumer PCs do not have a good enough cooling spec for 24×7 operation, let alone 100% GPU utilisation. Keep a close eye on CPU and GPU temperatures using something like CPUid and add more cooling if temperatures start getting toasty. As a guide, 70°C is fine, 80°C is getting a bit too warm for comfort and 90°C is definitely too hot.
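If you log temperatures, the rule of thumb above is simple to turn into a check. This is just one reading of the guide: the text only gives three reference points, so the exact band edges (80°C and 90°C) and the function name are my assumptions.

```python
def classify_temperature(celsius: float) -> str:
    """Classify a CPU or GPU temperature using the article's rough guide."""
    if celsius >= 90:
        return "too hot"   # add cooling or stop folding
    if celsius >= 80:
        return "warm"      # getting uncomfortable, keep an eye on it
    return "fine"


# Hypothetical readings, in degrees Celsius.
for reading in (68, 83, 91):
    print(f"{reading}°C: {classify_temperature(reading)}")
```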

I have found that a case that mounts the motherboard horizontally gives much better overall cooling because the GPU cards are vertical, so the heat rises away from the motherboard rather than up towards the CPU or second GPU. Unfortunately, horizontal mount cases, sometimes referred to as cubes, are relatively uncommon for some odd reason. I ended up with a CoolerMaster HAF XB EVO which does a great job. It seems to be out of production but I had no real difficulty finding a source on-line.

And finally

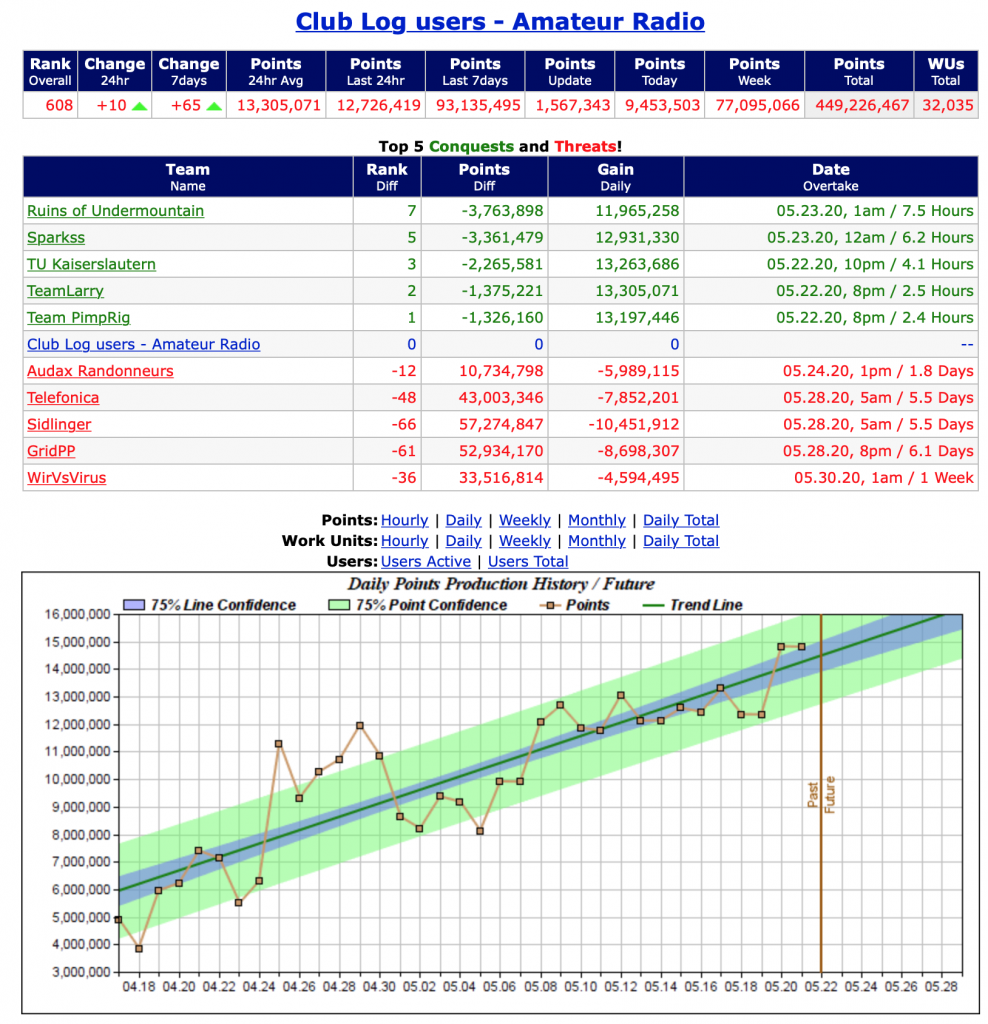

How is our Club Log team doing with all these GPUs folding away? Pretty well really! As we head towards 500 million points, we are steadily working our way up the league table as a major team contributor. Michael started this team just a few weeks ago and we are now in the top quarter of one percent of all teams world-wide.

Not bad. Not bad at all! Thank you for making a difference.

73, John, G3WGV

PS: Tell me what you think of this series and what you would like me to cover next.